How to Optimize REST APIs for Faster Response Times

Learn how to optimize REST APIs for faster response times with practical strategies, beginner-friendly examples, expert advice, and useful resources to improve performance and user experience.

TECHNOLOGY

4/29/2026 · 12 min read

In the world of modern web and mobile applications, speed is everything. Users expect websites and apps to respond instantly. Whether someone is shopping online, booking a cab, scrolling social media, or using a banking app, every second matters. If an application takes too long to load or process data, users quickly lose patience and may leave for a competitor. This is where REST APIs become critical.

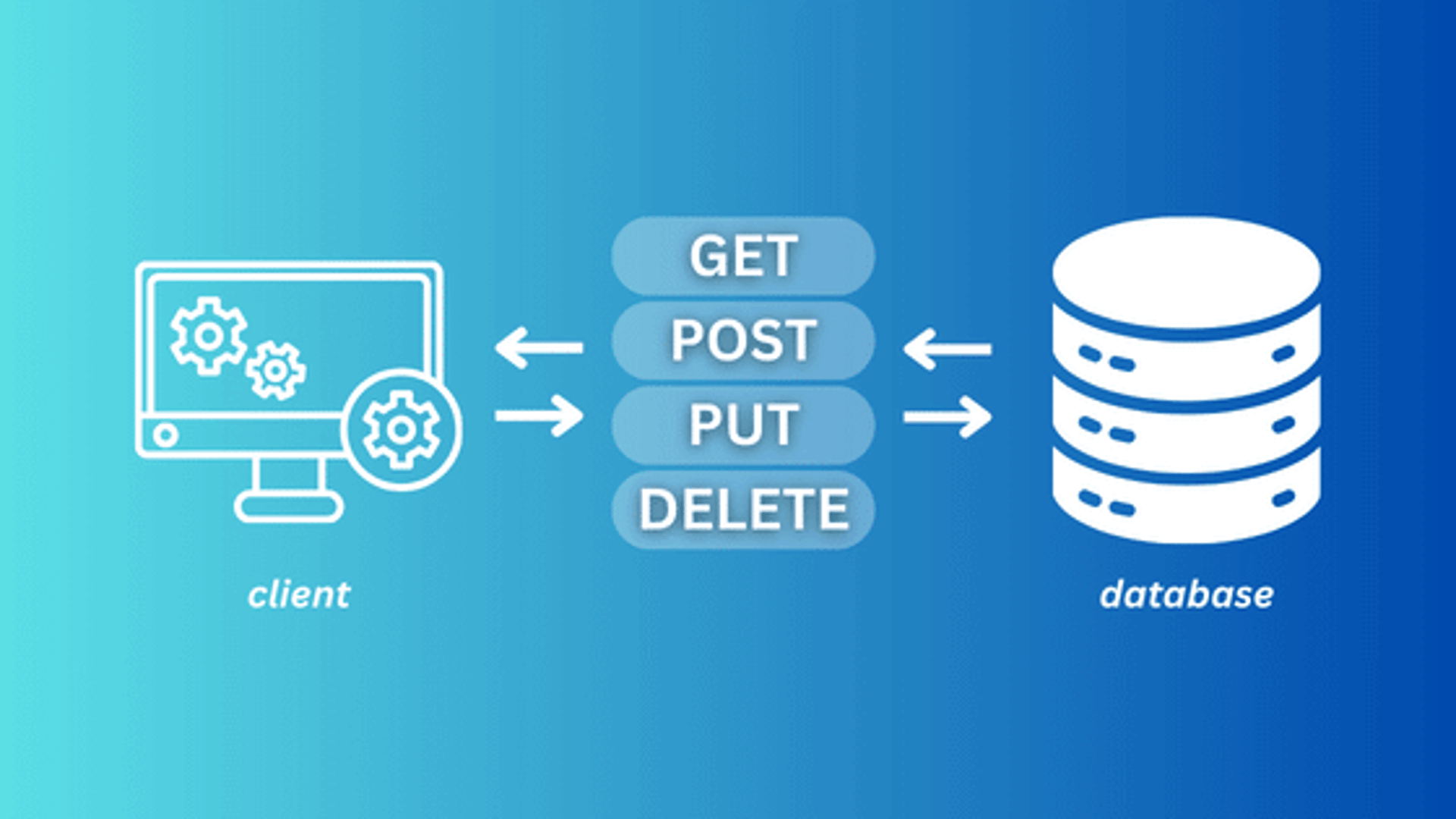

REST APIs (Representational State Transfer Application Programming Interfaces) act as the communication bridge between the frontend and backend of an application. They handle requests, fetch data, process information, and deliver responses. From displaying product listings on an eCommerce site to loading user profiles in a mobile app, REST APIs are constantly working in the background.

However, if your REST APIs are slow, the entire application suffers. Slow response times can frustrate users, increase bounce rates, hurt conversions, and even affect your business revenue. On the other hand, optimized REST APIs improve speed, scalability, and overall user satisfaction.

In this guide, we’ll explore how to optimize REST APIs for faster response times with simple examples, expert advice, and useful resources that both beginners and experienced developers can understand.

What Is The Difference Between REST and REST APIs

Before learning how to optimize REST APIs, it’s important to understand what REST actually means and how it differs from REST APIs. REST stands for Representational State Transfer. It is not a tool, framework, or programming language. Instead, REST is an architectural style: a set of guidelines and principles used for designing network-based applications. It was created to make communication between systems simple, scalable, and efficient over the web.

You can think of REST as a rulebook or blueprint that explains how systems should communicate with each other.

REST is based on several important principles. One of the main principles is stateless communication, which means every request sent by the client must contain all the information the server needs to process it. The server does not keep client session state between requests.

Another principle is client-server architecture. This means the frontend (what users see) and the backend (where data is processed and stored) are separated, making development more flexible and manageable.

REST also supports cacheable responses. This allows responses to be stored temporarily so repeated requests can be served faster, improving speed and performance.

A uniform interface is another key principle. APIs should have consistent URL structures, methods, and responses so developers can easily understand and use them.

On the other hand, REST APIs are actual application programming interfaces built by following REST principles. They are the practical implementation of the REST architecture.

In simple terms, if REST is the blueprint, REST APIs are the real buildings created using that blueprint.

For example, imagine you have an online shopping website. REST defines the communication rules between the website, mobile app, and server. REST APIs are the specific endpoints used to perform actions.

Examples of REST API endpoints include:

GET /products – to fetch product details

POST /orders – to create a new order

PUT /profile – to update user profile information

DELETE /cart-item – to remove an item from the shopping cart

These endpoints allow applications to perform CRUD operations (Create, Read, Update, and Delete) efficiently.

So, the main difference is simple: REST is the architectural style or set of principles, while REST APIs are the actual web services developed using those principles. Understanding this difference makes it easier to learn how to design and optimize REST APIs for better speed, scalability, and user experience.

Why REST API Performance Matters

REST API performance is crucial because it directly affects user experience and business success. Imagine ordering food online and waiting 10 seconds just to get an order confirmation. That delay feels frustrating and may even make you leave the app. In many cases, such delays happen because the app relies on slow REST APIs to communicate with the server.

REST APIs act as the bridge between the frontend and backend. Every action like logging in, searching for products, or placing an order depends on APIs. If they are slow, the entire website or app feels slow and unreliable.

Poor-performing REST APIs can lead to slow page loads, app crashes, request timeouts, poor user experience, higher server costs, and lower customer retention. Users today expect fast responses, and even small delays can push them toward competitors.

On the other hand, fast REST APIs improve user satisfaction, scalability, reliability, and conversion rates. According to industry studies, even a 1-second delay can significantly reduce customer satisfaction and sales. That’s why optimizing REST APIs is important for both technical performance and business growth.

Use Caching To Speed Up REST APIs

Caching is one of the most effective ways to improve the speed and performance of REST APIs. It works by temporarily storing frequently requested data so the server doesn’t need to process the same request over and over again. Instead of fetching data from the database every time, the API can quickly return the stored version, reducing response time significantly.

For example, imagine an online store where thousands of users request the same “Best Sellers” product list every minute. Without caching, the server would need to query the database for each request, which increases load and slows down performance. With caching, the product list is stored in memory and can be delivered instantly to users, making the experience much faster and smoother.

There are several types of caching used in REST APIs. Browser caching stores responses on the user’s device, so repeated requests don’t always reach the server. Server-side caching stores data on the server for quick access. CDN caching uses a Content Delivery Network to store and deliver content from servers closer to the user’s location. Database query caching stores the results of common database queries to reduce repeated processing.

Popular tools for caching include Redis, Memcached, and Cloudflare CDN. Redis is especially useful for storing session data, product listings, and user profiles for quick retrieval.

By using caching effectively, businesses can reduce database load, lower server costs, and dramatically improve API response times, resulting in a faster and more reliable user experience.
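
To make the idea concrete, here is a minimal sketch of server-side caching in Python. It is an illustration under stated assumptions rather than a production setup: the TTLCache class, the fetch_best_sellers function, and the 60-second lifetime are all made up for this example, and a real deployment would more likely keep cached data in a shared store such as Redis.

```python
import time


class TTLCache:
    """Tiny in-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock   # injectable so expiry can be tested without waiting
        self._store = {}     # key -> (expires_at, value)

    def get_or_load(self, key, loader):
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # cache hit: skip the expensive call
        value = loader()     # cache miss or expired entry: do the work once
        self._store[key] = (now + self.ttl, value)
        return value


db_queries = 0


def fetch_best_sellers():
    """Hypothetical stand-in for a slow database query."""
    global db_queries
    db_queries += 1
    return ["laptop", "headphones", "phone case"]


cache = TTLCache(ttl_seconds=60)

# Simulate 1,000 requests for the same list arriving within one minute.
for _ in range(1000):
    best_sellers = cache.get_or_load("best_sellers", fetch_best_sellers)
```

In this sketch the 1,000 simulated requests reach the "database" only once. The trade-off is freshness: users may see a list that is up to 60 seconds old, so the lifetime should match how quickly the underlying data changes.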

Implement Pagination For Large Datasets

Another common performance issue in REST APIs occurs when too much data is returned in a single request. If an API tries to fetch thousands of records at once, it increases the response size and slows down processing time. This can lead to delays, higher server load, and a poor user experience.

For example, imagine a blog website with 10,000 articles. Instead of returning all articles in one request, it is much more efficient to use pagination. A request like GET /articles?page=1&limit=10 fetches only ten records at a time. This makes the response lighter and faster, allowing users to load content quickly without overwhelming the server.

Pagination reduces payload size, improves speed, and enhances the overall user experience. It is commonly used in social media feeds, eCommerce stores, and content-heavy websites where large amounts of data need to be displayed gradually. Pagination can also be combined with filtering and sorting so users can quickly find the exact data they need while maintaining performance.
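
The page and limit parameters from the request above could be handled roughly as follows. This is a sketch only: the paginate function, the response field names, and the max_limit cap of 100 are assumptions for illustration, and a real API would usually push the slicing into the database (for example with LIMIT and OFFSET) instead of loading every record into memory first.

```python
def paginate(items, page=1, limit=10, max_limit=100):
    """Return one page of items plus metadata the client can use to navigate."""
    page = max(1, page)                      # treat page 0 or negatives as page 1
    limit = max(1, min(limit, max_limit))    # cap the size so nobody requests everything
    start = (page - 1) * limit
    total = len(items)
    return {
        "data": items[start:start + limit],
        "page": page,
        "limit": limit,
        "total": total,
        "total_pages": (total + limit - 1) // limit,  # ceiling division
    }


# Stand-in for the 10,000 blog articles mentioned above.
articles = [f"Article {n}" for n in range(1, 10001)]

first_page = paginate(articles, page=1, limit=10)
```

Capping the limit matters as much as supporting it: without a maximum, a client could ask for limit=10000 and bring back the original problem.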

Compress API Responses

Another effective way to improve REST API performance is by compressing API responses. Large API responses take more time to travel over the internet, especially for users with slower network connections. Compression reduces the size of the response before it is sent to the client, making data transfer much faster.

For instance, a JSON response of 1 MB can often be compressed to under 200 KB using algorithms like Gzip or Brotli. This significantly reduces bandwidth usage and improves loading times. In Node.js with Express, for example, compression can be enabled by adding the compression middleware with a line like app.use(compression());. Even a small change like this can create a noticeable improvement in API performance.
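
The effect is easy to see with a small experiment. The sketch below uses Python's standard gzip module on a made-up product list; the payload shape and the compress_json helper are assumptions for illustration, and the exact savings depend heavily on how repetitive the data is. In a real API the server would normally compress only when the client's Accept-Encoding header allows it and would mark the response with Content-Encoding: gzip.

```python
import gzip
import json


def compress_json(payload):
    """Serialize payload to JSON bytes and return (raw, gzip-compressed)."""
    raw = json.dumps(payload).encode("utf-8")
    return raw, gzip.compress(raw)


# Hypothetical response body: repetitive JSON, as API responses often are.
products = [
    {"id": i, "name": f"Product {i}", "category": "electronics", "in_stock": True}
    for i in range(2000)
]

raw, compressed = compress_json(products)
print(f"raw: {len(raw)} bytes, gzip: {len(compressed)} bytes")

# The client reverses the process and gets the identical data back.
restored = json.loads(gzip.decompress(compressed))
```

Compression costs some CPU time on both ends, so it pays off most for larger text responses such as JSON and matters less for tiny payloads or formats that are already compressed.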

Compression only shrinks the payload; it does not speed up the work of producing it. Since APIs often rely on databases to fetch and update information, slow or inefficient queries can still be a major bottleneck, which is why database optimization is the next step.

Optimize Database Queries

A slow database often causes slow REST APIs, no matter how powerful the server is. Inefficient database queries can create bottlenecks that delay responses and increase server load.

For example, instead of using a query like SELECT * FROM users;, it is more efficient to request only the required fields, such as SELECT id, name, email FROM users;. Fetching only necessary data reduces processing time, database workload, and bandwidth usage.

Other effective database optimization methods include using indexes, avoiding unnecessary joins, and reducing duplicate queries. Indexes help databases locate data faster, just like an index in a book helps readers quickly find specific topics. Without proper indexing, the database may need to scan every row, which takes more time.

Tools like MySQL Slow Query Log, PostgreSQL EXPLAIN, and New Relic can help developers identify slow queries and performance bottlenecks.

Think of your database as a library. Without an index, finding a specific book can take a long time because you need to search shelf by shelf. With proper indexing, you can locate the book almost instantly. The same principle applies to databases: better organization leads to faster retrieval and better API performance.
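
The difference an index makes can be observed directly by asking the database how it plans to run a query. The sketch below uses SQLite (bundled with Python) purely as a convenient stand-in; the users table, its columns, and the idx_users_email index name are assumptions for this example. PostgreSQL and MySQL offer their own EXPLAIN commands with different output.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, email TEXT, bio TEXT)"
)
conn.executemany(
    "INSERT INTO users (name, email, bio) VALUES (?, ?, ?)",
    [(f"User {i}", f"user{i}@example.com", "x" * 200) for i in range(1000)],
)

# Select only the columns the API needs instead of SELECT *.
QUERY = "SELECT id, name, email FROM users WHERE email = ?"
PARAMS = ("user500@example.com",)


def query_plan(connection, sql, params):
    """Return SQLite's description of how it would execute the query."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[-1] for row in rows)  # last column holds the detail text


plan_before = query_plan(conn, QUERY, PARAMS)   # full table scan
conn.execute("CREATE INDEX idx_users_email ON users (email)")
plan_after = query_plan(conn, QUERY, PARAMS)    # lookup through the index

print("before:", plan_before)
print("after: ", plan_after)
row = conn.execute(QUERY, PARAMS).fetchone()
```

Before the index exists, the plan reports a scan of the whole table; afterwards it reports a search that uses idx_users_email. Indexes are not free, since each one adds work to inserts and updates, so they are best added for columns that queries actually filter or sort on.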

Use Asynchronous Processing

Not every request sent to a REST API needs to be completed instantly. Some operations are time-consuming and can slow down the system if they are handled in real time. When these heavy tasks are processed synchronously, users may face delays, slower response times, or even request timeouts.

Tasks such as sending confirmation emails, creating reports, processing videos, resizing images, or managing large file uploads often require extra time and computing power. If the server waits to finish these tasks before replying, the overall performance of the API can suffer.

A smarter solution is asynchronous processing. With this method, the API sends an immediate response to the user while the actual task continues in the background. For example, when a user uploads a file, the API can quickly confirm that the upload was received, and a background worker can process the file afterward. This improves speed and creates a smoother user experience.

To manage background tasks efficiently, developers often use tools like RabbitMQ, Apache Kafka, BullMQ, and Celery. These tools help organize and process jobs without affecting the API’s immediate performance.

By moving resource-heavy tasks to the background, REST APIs remain fast, responsive, and capable of handling more users without sacrificing reliability.
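
Here is a minimal sketch of that pattern using only Python's standard library. An in-process queue and a single worker thread stand in for a real message broker such as RabbitMQ or a job library such as Celery; the handle_upload and process_file functions and the response fields are hypothetical names chosen for this example.

```python
import queue
import threading
import uuid

jobs = queue.Queue()   # pending background work
results = {}           # job_id -> result, filled in by the worker


def process_file(filename):
    """Hypothetical stand-in for slow work such as resizing an image."""
    return filename.upper()


def worker():
    """Runs in the background and handles jobs one at a time."""
    while True:
        job_id, filename = jobs.get()
        try:
            results[job_id] = process_file(filename)
        finally:
            jobs.task_done()


threading.Thread(target=worker, daemon=True).start()


def handle_upload(filename):
    """API handler: enqueue the work and reply immediately."""
    job_id = str(uuid.uuid4())
    jobs.put((job_id, filename))
    # 202 Accepted tells the client the request was received but not yet processed.
    return {"status": 202, "job_id": job_id}


response = handle_upload("holiday.png")
jobs.join()  # only for this demo: wait so the result can be inspected
```

The handler returns before the file is processed, and the client can use the job_id to check on progress later. An in-memory queue loses its jobs if the process restarts, which is one reason production systems usually rely on a durable broker instead.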

Reduce the Number of API Calls

Every API request introduces some level of network delay, even if it’s small. When multiple requests are made back-to-back, this latency adds up and can slow down the overall performance of an application. Reducing the number of API calls is therefore an effective way to improve speed and responsiveness.

For example, instead of sending three separate requests like GET /user, GET /orders, and GET /notifications, you can combine them into a single request such as GET /dashboard. This approach reduces the number of round trips between the client and the server, allowing data to be fetched more efficiently in one go.

Minimizing API calls not only speeds up response times but also reduces server load and network usage. This technique is especially beneficial for mobile applications, where internet connectivity may be slower or unstable. By consolidating requests, applications can deliver a smoother and faster user experience even on limited networks.
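
A combined endpoint like GET /dashboard might be sketched as below. The three fetch functions are hypothetical placeholders for whatever the separate endpoints would have done, and the sample data is invented; the point is only that the server gathers everything in one place and returns a single response.

```python
import asyncio


async def fetch_user(user_id):
    """Hypothetical lookup that GET /user would have performed."""
    return {"id": user_id, "name": "Ada"}


async def fetch_orders(user_id):
    """Hypothetical lookup that GET /orders would have performed."""
    return [{"order_id": 1, "total": 49.99}]


async def fetch_notifications(user_id):
    """Hypothetical lookup that GET /notifications would have performed."""
    return [{"message": "Your order has shipped"}]


async def get_dashboard(user_id):
    """Handler for GET /dashboard: one round trip instead of three."""
    # The lookups do not depend on each other, so run them concurrently.
    user, orders, notifications = await asyncio.gather(
        fetch_user(user_id),
        fetch_orders(user_id),
        fetch_notifications(user_id),
    )
    return {"user": user, "orders": orders, "notifications": notifications}


dashboard = asyncio.run(get_dashboard(7))
```

One caution with this design: an aggregated endpoint is only as fast as its slowest part, so it works best when the combined data is genuinely needed together on the same screen.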

Use Load Balancing For Scalability

As your application grows and attracts more users, relying on a single server can quickly become a limitation. One server handling all incoming requests may lead to slow responses, downtime, or even system crashes during high traffic periods. This is where load balancing becomes essential for maintaining performance and scalability.

Load balancing works by distributing incoming API requests across multiple servers instead of sending everything to just one. By spreading the workload evenly, it prevents any single server from becoming overloaded and ensures that requests are handled more efficiently. This leads to faster response times and a smoother experience for users.

There are several popular tools used for load balancing, including NGINX, HAProxy, and AWS Elastic Load Balancer. These tools help manage traffic distribution and ensure that applications can scale as demand increases.

Load balancing not only improves speed but also enhances availability and fault tolerance. For example, if one server goes down or crashes, the load balancer automatically redirects traffic to other active servers. This prevents service interruptions and keeps your REST APIs running smoothly even under heavy load.

By implementing load balancing, businesses can build more reliable, scalable, and high-performing applications that can handle growth without compromising user experience.
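
To show the core idea, here is a toy round-robin balancer in Python. It is a sketch of one common distribution strategy, not how NGINX, HAProxy, or AWS Elastic Load Balancer are implemented; the RoundRobinBalancer class and the server names are assumptions for this example, and real load balancers detect failures through automated health checks rather than manual calls.

```python
class RoundRobinBalancer:
    """Hands out servers in rotation, skipping any marked as down."""

    def __init__(self, servers):
        self.servers = list(servers)
        self.healthy = set(self.servers)
        self._next = 0  # index of the server to try next

    def mark_down(self, server):
        self.healthy.discard(server)

    def mark_up(self, server):
        if server in self.servers:
            self.healthy.add(server)

    def pick(self):
        # Try each server at most once per call, starting where we left off.
        for _ in range(len(self.servers)):
            server = self.servers[self._next]
            self._next = (self._next + 1) % len(self.servers)
            if server in self.healthy:
                return server
        raise RuntimeError("no healthy servers available")


balancer = RoundRobinBalancer(["api-1", "api-2", "api-3"])
normal = [balancer.pick() for _ in range(4)]

balancer.mark_down("api-2")  # simulate a crashed server
degraded = [balancer.pick() for _ in range(3)]
```

While all three servers are healthy the requests rotate evenly; once api-2 is marked down, traffic flows only to the remaining two, which is the failover behavior described above.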

Use Modern Protocols Like HTTP/2 and HTTP/3

Traditional HTTP/1.1 can only handle one request at a time on each connection, which can slow down communication between clients and servers, especially when multiple requests are sent at once. As applications grow more complex, relying on older protocols can create unnecessary delays and reduce overall performance.

Modern protocols like HTTP/2 and HTTP/3 are designed to overcome these limitations. HTTP/2 introduces multiplexing, which allows multiple requests and responses to be sent over a single connection at the same time. This reduces the need to open multiple connections and significantly improves speed and efficiency.

HTTP/3 takes performance a step further by using QUIC, a transport protocol built on UDP. This reduces latency, improves connection reliability, and handles packet loss more effectively. As a result, users experience faster load times and smoother interactions, even on unstable networks.

For applications serving users across different regions, upgrading to HTTP/2 or HTTP/3 can make a noticeable difference in performance. It helps reduce delays, improves data transfer efficiency, and ensures a more responsive and reliable experience for users worldwide.

Monitor REST APIs Continuously

Optimizing REST APIs is not a one-time effort; it requires continuous monitoring to ensure consistent performance. Even a well-optimized API can face issues over time due to increased traffic, server load, or unexpected errors. Without proper monitoring, these problems can go unnoticed until they start affecting users.

Continuous monitoring helps you track key performance metrics such as response time, error rates, throughput, and CPU usage. These metrics give you a clear picture of how your API is performing in real time. For instance, if your API response time suddenly increases from 100 milliseconds to 2 seconds, it’s a strong signal that something is wrong and needs immediate attention.

There are several tools available to help monitor API performance effectively, including Postman, Apache JMeter, Prometheus, Grafana, and Datadog. These tools can provide real-time insights, visual dashboards, and automated alerts.

By setting up alerts and tracking performance regularly, developers can quickly identify bottlenecks and fix issues before users even notice them. This proactive approach ensures that REST APIs remain fast, reliable, and capable of delivering a smooth user experience at all times.
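
As a rough illustration of what such tools calculate, the sketch below summarizes a batch of request records and decides whether to raise an alert. The record format, the function names, and the thresholds (a 95th-percentile latency of 300 ms and a 1% error rate) are assumptions for this example, not defaults from any monitoring product.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the value below which pct% of samples fall."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(requests):
    """Reduce raw request records to a few headline metrics."""
    latencies = [r["ms"] for r in requests]
    errors = sum(1 for r in requests if r["status"] >= 500)
    return {
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
        "error_rate": errors / len(requests),
    }


def should_alert(summary, max_p95_ms=300, max_error_rate=0.01):
    """Alert when tail latency or the share of server errors is too high."""
    return summary["p95_ms"] > max_p95_ms or summary["error_rate"] > max_error_rate


# Ten healthy requests between 90 ms and 180 ms.
healthy = [{"ms": 90 + i * 10, "status": 200} for i in range(10)]
healthy_summary = summarize(healthy)

# The same traffic plus one request that took 2 seconds and failed.
incident_summary = summarize(healthy + [{"ms": 2000, "status": 500}])
```

Percentiles are used here instead of an average because a handful of very slow requests can hide behind a healthy-looking mean while still ruining the experience for real users.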

Expert Advice for Beginners and Professionals

When working with REST APIs, your approach to optimization should depend on your experience level. If you’re just getting started, it’s best to focus on the fundamentals first. Techniques like caching, pagination, and optimizing database queries can deliver noticeable performance improvements without adding too much complexity. These basics form the foundation of a fast and reliable API.

For more experienced developers, advanced strategies can take performance to the next level. Implementing asynchronous processing, upgrading to modern protocols like HTTP/3, and using distributed caching systems can significantly enhance scalability and efficiency. These approaches are especially useful for handling high traffic and building large-scale applications.

No matter your level, it’s important to define clear performance goals. A commonly used benchmark is: response times under 100 milliseconds are considered excellent, between 100 and 300 milliseconds are good, and anything above 300 milliseconds usually indicates a need for improvement. Having these targets helps you measure progress and identify areas that need optimization.

Another key practice is implementing rate limiting. This controls how many requests a user can send within a specific time frame. For example, allowing 100 requests per minute per user can prevent server overload and protect your API from misuse or malicious attacks. By combining performance goals with safeguards like rate limiting, you can build REST APIs that are not only fast but also secure and reliable.
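
A simple way to picture rate limiting is a fixed-window counter, sketched below in Python. This is one of several possible strategies (token buckets and sliding windows are common alternatives), and the FixedWindowRateLimiter class is a hypothetical helper written for this example. With several API servers, the counters would need to live in a shared store instead of a local dictionary.

```python
import time


class FixedWindowRateLimiter:
    """Allows at most `limit` requests per user in each time window."""

    def __init__(self, limit=100, window_seconds=60, clock=time.monotonic):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock    # injectable so the limiter can be tested without waiting
        self._windows = {}    # user_id -> (window_start, request_count)

    def allow(self, user_id):
        now = self.clock()
        start, count = self._windows.get(user_id, (now, 0))
        if now - start >= self.window:
            start, count = now, 0   # the old window has ended: start a fresh one
        if count >= self.limit:
            self._windows[user_id] = (start, count)
            return False            # over the limit: the API would answer 429
        self._windows[user_id] = (start, count + 1)
        return True


limiter = FixedWindowRateLimiter(limit=100, window_seconds=60)
decisions = [limiter.allow("user-42") for _ in range(101)]
```

In this run the first 100 requests are allowed and the 101st is rejected. An API would typically answer a rejected request with HTTP status 429 Too Many Requests so that clients know to slow down and retry later.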

Useful Resources to Learn REST API Optimization

If you want to master REST API optimization, learning from trusted resources can make a big difference. These platforms and guides provide practical knowledge, real-world examples, and best practices to help you build faster and more efficient APIs.

The Google API Design Guide is a great starting point for understanding how to design clean, consistent, and scalable APIs. Similarly, the Microsoft REST API Guidelines offer detailed recommendations on structuring APIs, handling requests, and maintaining consistency across services.

For documentation and testing, Swagger (a set of tools built around the OpenAPI Specification) is widely used by developers to design, document, and test APIs efficiently. To dive deeper into caching and performance, the Redis documentation is highly valuable, especially for learning how to store and retrieve data quickly.

If you prefer structured learning, platforms like freeCodeCamp offer free tutorials that are beginner-friendly and practical. For more in-depth courses, Udemy and Coursera provide a wide range of backend development and API-focused programs taught by industry experts.

By exploring these resources, you can strengthen your understanding of REST APIs, stay updated with industry practices, and build high-performing, scalable applications with confidence.

Conclusion

Optimizing REST APIs is essential for building fast, reliable, and scalable digital experiences. Every improvement, whether it’s caching, compression, efficient database queries, or adopting modern protocols, contributes to faster response times and smoother performance. Even small optimizations can create a noticeable difference in how users interact with your application.

You can think of API optimization like tuning a car. When a car is well-maintained, it runs more smoothly, performs better, and uses resources efficiently. In the same way, a well-optimized REST API delivers quicker responses, reduces server load, and improves overall system efficiency.

In today’s competitive digital landscape, speed is no longer optional; it’s expected. Users prefer applications that respond instantly and work seamlessly. Slow performance can easily drive them toward alternatives.

That’s why investing time in optimizing your REST APIs is crucial. It not only enhances user satisfaction but also supports long-term growth by improving scalability and reducing operational costs. Start optimizing today to build applications that are fast, efficient, and truly user-friendly.

FAQs

1. What is the ideal response time for REST APIs?

Under 100ms is considered excellent.

2. How does caching help REST APIs?

It stores frequently requested data to reduce server processing time.

3. Which tools help monitor REST APIs?

Grafana, Prometheus, Datadog, and Postman are popular options.

4. Is HTTP/3 good for REST APIs?

Yes, it can reduce latency and improve speed, especially on unstable networks.

5. Can database optimization improve REST APIs?

Absolutely. Better queries and indexing improve performance significantly.

